Abstract

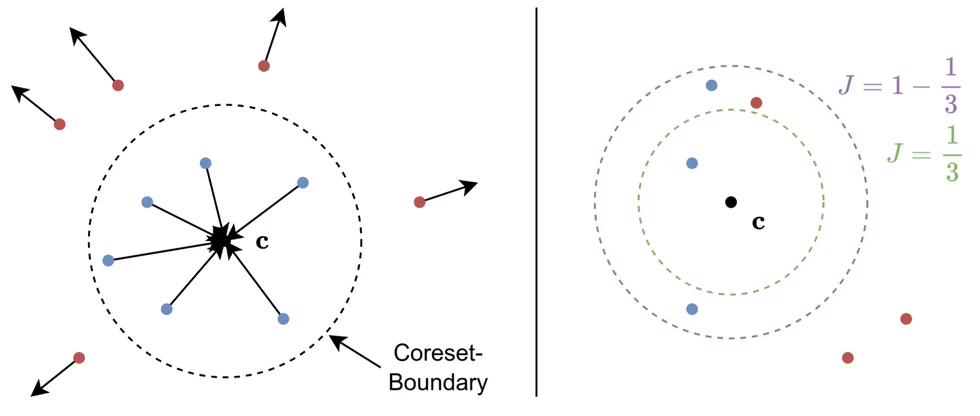

The goal of coreset selection methods is to identify representative subsets of datasets for efficient model training. Yet, existing methods often ignore the possibility of annotation errors and require fixed pruning ratios, making them impractical in real-world settings. We present HyperCore, a robust and adaptive coreset selection framework designed explicitly for noisy environments. HyperCore leverages lightweight hypersphere models learned per class, embedding in-class samples close to a hypersphere center while naturally segregating out-of-class samples based on their distance. By using Youden's J statistic, HyperCore can adaptively select pruning thresholds, enabling automatic, noise-aware data pruning without hyperparameter tuning. Our experiments reveal that HyperCore consistently surpasses state-of-the-art coreset selection methods, especially under noisy and low-data regimes. HyperCore effectively discards mislabeled and ambiguous points, yielding compact yet highly informative subsets suitable for scalable and noise-free learning.
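The adaptive thresholding step can be illustrated with a minimal sketch. It assumes that a per-class hypersphere model has already produced a distance to the class center for every sample; training that model is not shown. The function names `youden_threshold` and `select_coreset` and the toy distances are hypothetical and not taken from the paper. The sketch picks the distance cutoff that maximizes Youden's J statistic (true positive rate minus false positive rate) and keeps only the in-class samples inside that cutoff.

```python
import numpy as np


def youden_threshold(in_class_dist, out_class_dist):
    """Return (threshold, J) maximizing Youden's J = TPR - FPR.

    Assumption: a sample is predicted "in-class" when its distance to the
    hypersphere center is <= threshold.
    """
    in_class_dist = np.asarray(in_class_dist, dtype=float)
    out_class_dist = np.asarray(out_class_dist, dtype=float)

    # Every observed distance is a candidate cutoff.
    candidates = np.unique(np.concatenate([in_class_dist, out_class_dist]))

    # TPR: fraction of in-class samples inside the cutoff.
    tpr = np.array([(in_class_dist <= t).mean() for t in candidates])
    # FPR: fraction of out-of-class samples inside the cutoff.
    fpr = np.array([(out_class_dist <= t).mean() for t in candidates])

    j = tpr - fpr
    best = int(np.argmax(j))
    return float(candidates[best]), float(j[best])


def select_coreset(in_class_dist, out_class_dist):
    """Return indices of in-class samples kept after pruning (hypothetical helper)."""
    threshold, _ = youden_threshold(in_class_dist, out_class_dist)
    return np.flatnonzero(np.asarray(in_class_dist, dtype=float) <= threshold)


# Toy distances: the last in-class sample lies far from the center,
# as a mislabeled point might.
in_dist = [0.1, 0.2, 0.3, 2.0]
out_dist = [1.5, 1.8, 2.5, 3.0]
threshold, j_stat = youden_threshold(in_dist, out_dist)
kept = select_coreset(in_dist, out_dist)
```

In this toy case the far-away in-class sample falls outside the selected cutoff and is pruned, with no pruning ratio specified in advance.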

For more information, see the paper PDF (arXiv: https://arxiv.org/abs/2509.21746).

Citation

If you use this work, please cite our paper:

@misc{moser2025hypercore,
  title = {HyperCore: Coreset Selection under Noise via Hypersphere Models},
  author = {Brian B. Moser and Arundhati S. Shanbhag and Tobias C. Nauen and Stanislav Frolov and Federico Raue and Joachim Folz and Andreas Dengel},
  year = {2025},
  eprint = {2509.21746},
  archivePrefix = {arXiv},
  primaryClass = {cs.LG},
  url = {https://arxiv.org/abs/2509.21746},
  note = {Accepted to ICPR 2026},
}