When Pretty Isn't Useful: Investigating Why Modern Text-to-Image Models Fail as Reliable Training Data Generators

arXiv

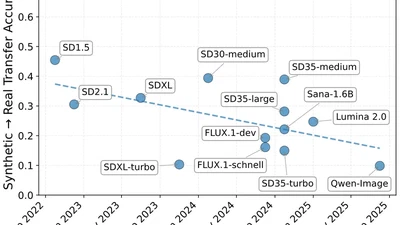

We show that newer text-to-image models are progressively worse training data generators despite their better visual quality, because they collapse to a narrow, aesthetic-centric distribution that diverges from real data.

krzysztof-adamkiewicz