TaylorShift: Shifting the Complexity of Self-Attention from Squared to Linear (and Back) using Taylor-Softmax

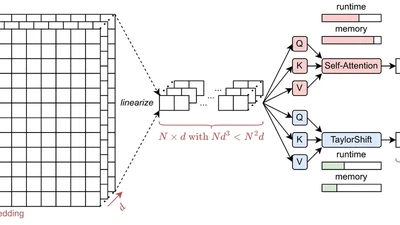

Oral presentation at ICPR 2024 introducing TaylorShift, a novel reformulation of the attention mechanism using Taylor-Softmax that computes full token-to-token interactions in time linear in the sequence length.
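
To make the linear-time claim concrete, here is a minimal NumPy sketch of the underlying idea. It assumes a second-order Taylor expansion of the exponential, 1 + x + x²/2, in place of exp(x), and it leaves out the scaling and normalization details a practical implementation would need. The function names are hypothetical and are not taken from the TaylorShift code. The sketch relies on the identity (q·k)² = ⟨q⊗q, k⊗k⟩, which lets the sums over keys be computed once and reused for every query, so the N×N attention matrix is never formed.

```python
import numpy as np


def taylor_attention_direct(Q, K, V):
    """Quadratic-cost reference: builds the full N x N attention matrix."""
    S = Q @ K.T                                # (N, N) token-to-token scores
    A = 1.0 + S + 0.5 * S**2                   # 2nd-order Taylor of exp; always > 0
    A = A / A.sum(axis=1, keepdims=True)       # row-normalize like softmax
    return A @ V


def taylor_attention_linear(Q, K, V):
    """Same result with cost linear in N (but higher-order in head dim d)."""
    N, d = Q.shape
    # Append a column of ones so the normalizer is computed alongside the output.
    V1 = np.concatenate([V, np.ones((N, 1))], axis=1)       # (N, dv + 1)

    # Zeroth-order term: sum_j V1_j, identical for every query.
    t0 = V1.sum(axis=0)                                     # (dv + 1,)

    # First-order term: sum_j (q_i . k_j) V1_j = Q (K^T V1).
    t1 = Q @ (K.T @ V1)                                     # (N, dv + 1)

    # Second-order term: (q . k)^2 = <q (x) q, k (x) k>.
    Q2 = np.einsum("ni,nj->nij", Q, Q).reshape(N, d * d)    # (N, d^2)
    K2 = np.einsum("ni,nj->nij", K, K).reshape(N, d * d)    # (N, d^2)
    t2 = 0.5 * (Q2 @ (K2.T @ V1))                           # (N, dv + 1)

    Y = t0 + t1 + t2
    # Last column holds the row sums of the (never materialized) matrix A.
    return Y[:, :-1] / Y[:, -1:]
```

The linear variant trades the N² term for a d² term in the head dimension, so in this sketch it only pays off once the sequence length is large relative to d; for short sequences the direct form can be cheaper, which is the "and back" in the title.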