TextTeacher: What Can Language Teach About Images?

Introduction

What if a language model could teach a vision model — and then step aside?

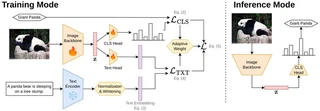

TextTeacher adds a frozen text encoder and a lightweight alignment loss to standard image classification training. Image captions provide per-instance semantic targets that shape the visual representation during training. At inference, everything text-related is discarded. The deployed model is a plain, fast vision network — no extra parameters, no added latency.

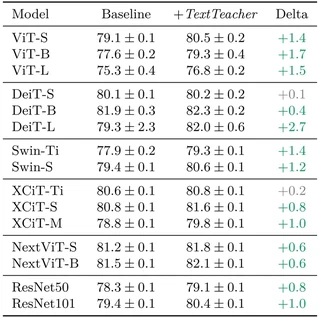

The result: up to +2.7 p.p. on ImageNet, a +1.0 p.p. average transfer gain across six fine-grained benchmarks, and +8.4 p.p. under 50% label noise, all with negligible compute overhead and without any multimodal pretraining of the target model. TextTeacher also distills knowledge 33% more efficiently than traditional vision-based distillation.

How it works

A lightweight text head projects the backbone’s CLS token into the embedding space of a frozen text encoder. An auxiliary contrastive loss aligns each image’s projection with the text embedding of its caption, pulling image features toward a pre-organised semantic manifold. The text head and encoder are dropped after training.
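The alignment step can be illustrated with a small, dependency-free sketch. It assumes a one-directional InfoNCE-style loss over cosine similarities with a temperature; the text above only says the loss is contrastive, so the exact form, the `temperature` value, and the function names here are assumptions for illustration rather than the authors' implementation.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length (guarding against zero vectors)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def alignment_loss(image_proj, text_emb, temperature=0.1):
    """InfoNCE-style loss over a batch (assumed form, not confirmed by the source).

    image_proj: projected CLS vectors from the text head, one per image.
    text_emb:   frozen caption embeddings, same order and dimension.
    """
    imgs = [l2_normalize(v) for v in image_proj]
    txts = [l2_normalize(v) for v in text_emb]
    total = 0.0
    for i, img in enumerate(imgs):
        # Cosine similarity of image i with every caption in the batch.
        logits = [sum(a * b for a, b in zip(img, txt)) / temperature for txt in txts]
        # Numerically stable log-softmax; caption i is the positive for image i.
        max_logit = max(logits)
        log_sum_exp = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
        total += log_sum_exp - logits[i]
    return total / len(imgs)


# Toy batch: two images with 2-D embeddings (hypothetical values).
captions = [[1.0, 0.0], [0.0, 1.0]]
aligned = alignment_loss([[0.9, 0.1], [0.1, 0.9]], captions)
swapped = alignment_loss([[0.1, 0.9], [0.9, 0.1]], captions)
```

Projections that point toward their own caption embedding (`aligned`) yield a much lower loss than mismatched ones (`swapped`). In training, a term like this would presumably be added to the classification loss with some weighting factor, and only the backbone would be kept afterwards.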

Results

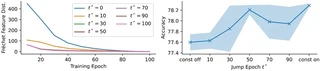

TextTeacher on ImageNet

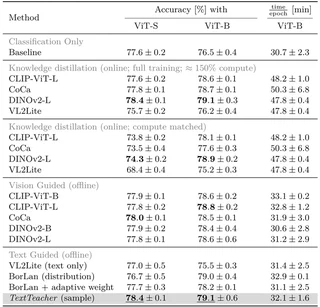

Comparison of Knowledge Distillation Methods

TextTeacher runs at nearly the same per-epoch cost as baseline classification (32 min/epoch vs. 31 min/epoch), while online knowledge distillation from DINOv2-L costs ~48 min/epoch. In a compute-matched setting, TextTeacher (79.1%) matches DINOv2-L online distillation trained for the full 100 epochs (79.1%) at only ~66% of the wall-clock time. Even when the full preprocessing (captioning and embedding the training set) is included, TextTeacher still saves ≈6 GPU-hours over 300-epoch online distillation.
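The per-epoch figures above can be turned into relative costs with a few lines of arithmetic. This sketch assumes both methods train for the same number of epochs, treats the approximate 48 min/epoch figure as exact, and leaves out the one-off preprocessing cost; the constant and function names are illustrative.

```python
# Per-epoch wall-clock times quoted in the text (minutes).
BASELINE_MIN = 31
TEXTTEACHER_MIN = 32
DINOV2_ONLINE_KD_MIN = 48  # quoted as approximate


def relative_cost(method_min, reference_min):
    """Return the method's time as a fraction of the reference time."""
    return method_min / reference_min


overhead_vs_baseline = relative_cost(TEXTTEACHER_MIN, BASELINE_MIN) - 1.0
cost_vs_online_kd = relative_cost(TEXTTEACHER_MIN, DINOV2_ONLINE_KD_MIN)

print(f"TextTeacher overhead over baseline: {overhead_vs_baseline:.1%}")  # 3.2%
print(f"TextTeacher time relative to online KD: {cost_vs_online_kd:.1%}")  # 66.7%
```

Under these assumptions, the ratio 32/48 reproduces the roughly 66% wall-clock figure, and the overhead over plain classification training is about 3%.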

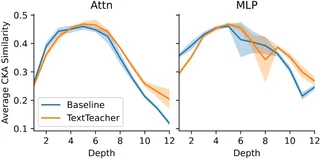

Analysis: How does TextTeacher work?

TextTeacher acts primarily as an early-phase preconditioner: most of the benefit is realised in the first 30–50 epochs, where it accelerates the formation of semantically organised features, especially in deeper layers.

Contributions

- We pose and investigate a fundamental question: can the semantic knowledge of a language model efficiently improve a vision model? We operationalise it via a decoupled auxiliary signal from a frozen text encoder.

- We introduce TextTeacher, a general approach that efficiently injects textual knowledge into standard vision backbones using an auxiliary alignment loss, requiring no text at inference.

- We provide extensive experiments showing improvements in accuracy and transfer, and demonstrate that language-based guidance outperforms visual guidance and knowledge distillation in a compute-matched setting.

Citation

If you use this work, please cite our paper:

@inproceedings{Nauen2026TextTeacher,
  author = {Nauen, Tobias Christian and Frolov, Stanislav and Moser, Brian B. and Raue, Federico and Anwar, Ahmed and Dengel, Andreas},
  title = {TextTeacher: What Can Language Teach About Images?},
  month = {May},
  year = {2026},
}