Which Transformer to Favor: A Comparative Analysis of Efficiency in Vision Transformers

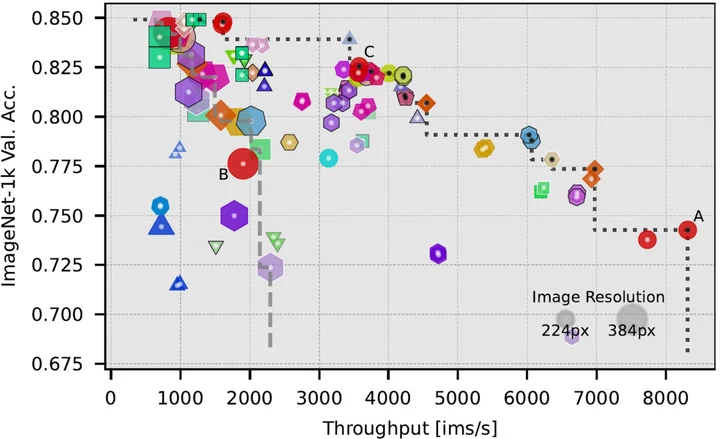

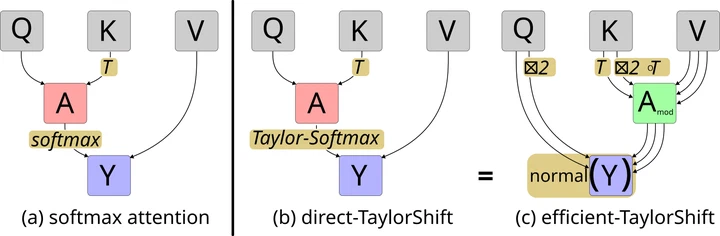

We present a comprehensive benchmark and analysis of more than 45 transformer models for image classification, evaluating their efficiency across several performance metrics. We identify the optimal architectures to use and find that model scaling is more efficient than image scaling.
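When models are compared on more than one metric, "optimal" needs a definition. One common choice, assumed here and not stated in the summary above, is Pareto optimality: a model is kept if no other model is at least as accurate and at least as cheap while being strictly better on one of the two. The sketch below illustrates that idea only; the `Result` type, the model names, and all numbers are hypothetical and are not measurements from the benchmark.

```python
from typing import NamedTuple


class Result(NamedTuple):
    name: str        # hypothetical model label
    accuracy: float  # higher is better
    cost: float      # e.g. inference time or memory; lower is better


def pareto_front(results: list[Result]) -> list[Result]:
    """Return the results that no other result dominates, sorted by cost."""
    front = []
    for r in results:
        # r is dominated if some other result is no worse on both metrics
        # and strictly better on at least one of them.
        dominated = any(
            o.accuracy >= r.accuracy
            and o.cost <= r.cost
            and (o.accuracy > r.accuracy or o.cost < r.cost)
            for o in results
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r.cost)


# Made-up numbers purely for illustration.
results = [
    Result("model_a", 0.80, 10.0),
    Result("model_b", 0.78, 12.0),  # dominated by model_a
    Result("model_c", 0.85, 20.0),
    Result("model_d", 0.70, 5.0),
]
front_names = [r.name for r in pareto_front(results)]
print(front_names)
```

Under this definition, a scaling strategy could be called more efficient if its variants land on the front more often than those of the alternative, although whether the analysis uses exactly this criterion is an assumption here.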