TextTeacher: What Can Language Teach About Images?

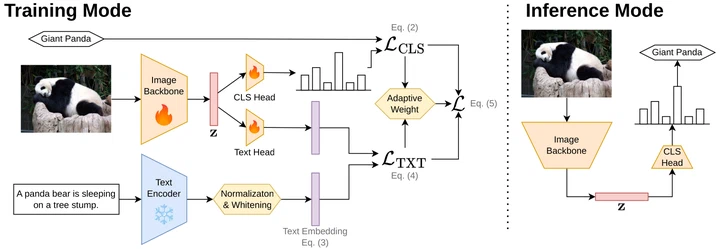

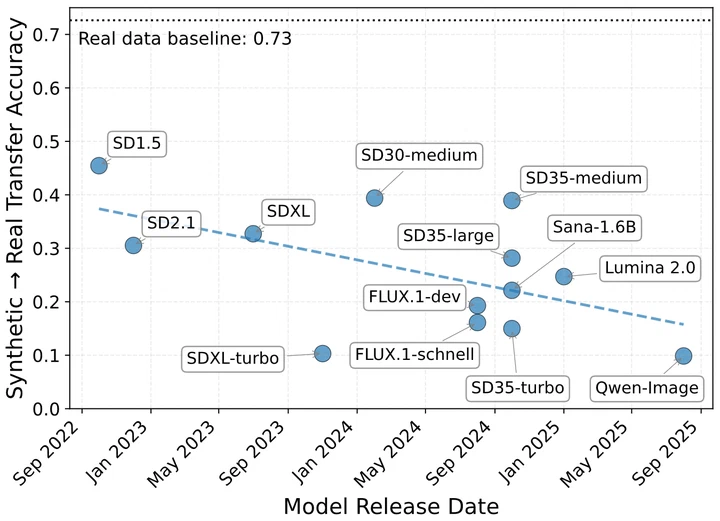

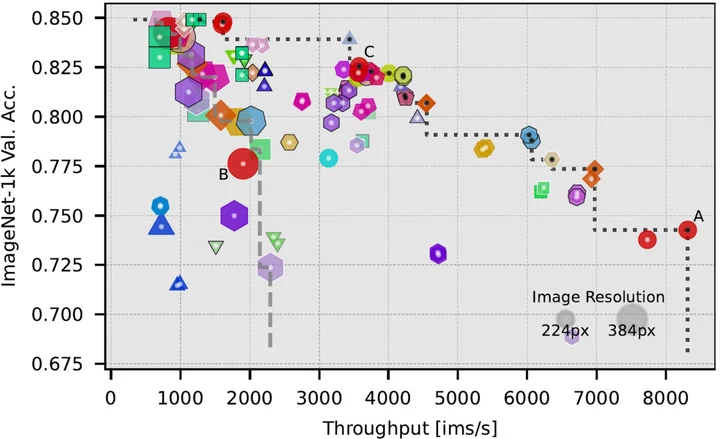

We use a frozen text encoder on image captions as a lightweight training-time auxiliary objective for image classifiers. The text components are dropped at inference, leaving a fast, unimodal vision model. Accuracy on ImageNet improves by up to +2.7 p.p. and downstream transfer by +1.0 p.p. on average, outperforming vision knowledge distillation at a fraction of the compute.
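
To make the training-time versus inference-time split concrete, here is a minimal sketch in plain Python. It is not the method's actual implementation: the summary above does not say what form the auxiliary objective takes, so the cosine-alignment term, the `aux_weight` parameter, and all function names below are assumptions chosen for illustration. The caption embedding stands in for the output of the frozen text encoder and is treated as a fixed target.

```python
import math


def cross_entropy(logits, label):
    """Softmax cross-entropy for a single example (numerically stable)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def training_loss(logits, label, image_embedding, caption_embedding,
                  aux_weight=0.5):
    """Classification loss plus a hypothetical caption-alignment term.

    ASSUMPTION: the auxiliary term is (1 - cosine similarity) between the
    image embedding and the caption embedding. The caption embedding is a
    constant here, mirroring a frozen text encoder that is never updated.
    """
    classification = cross_entropy(logits, label)
    alignment = 1.0 - cosine_similarity(image_embedding, caption_embedding)
    return classification + aux_weight * alignment


def predict(logits):
    """Inference path: only the vision model's logits are used."""
    return max(range(len(logits)), key=lambda i: logits[i])
```

In this sketch `predict` never touches a caption or a text embedding, which is the property the summary highlights: the text side adds cost only during training, and the deployed model stays purely visual.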